Do More Megapixels Mean Better Photo Quality?

Smartphone makers are locked in a constant battle to offer cutting-edge technology at pocket-friendly prices, and that battle has given us plenty of options we could not have imagined a decade ago.

In this relentless attempt to lure potential customers, manufacturers sometimes focus only on on-paper specifications, and ‘megapixels’ is a fine example of this effort.

In 2013, the megapixel debate was quite hot, with heated discussions over the size of smartphones and their seemingly phony megapixel claims. But the question is: do megapixels count?

Yes, they do, but the overall quality of a digital image is the combined result of many other factors, such as sensor size and image processing.

Sensor Size vs. Megapixels

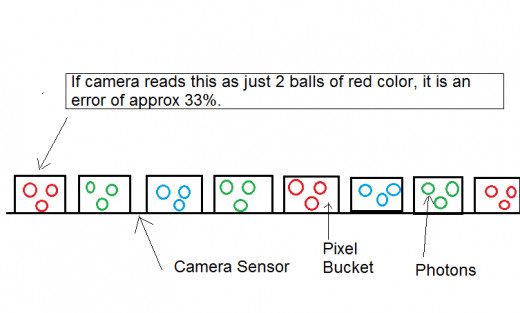

Increasing the megapixel count matters little if the camera’s sensor size stays the same. To collect light, a sensor has buckets known as pixels; millions of them are packed onto its surface.

Since smartphones are much smaller and significantly lighter than a typical dSLR, fitting a big sensor into one is costly and, given the dimensions of a phone, rather impractical.

When manufacturers advertise an affordable smartphone with a 13- or 21-megapixel camera, they are not lying. They are simply leaving out that they have crammed 13 or 21 million pixels onto a small sensor. The result is an annoying component called noise that gets added to the photographs. Noise occurs when the light detectors cannot accurately measure the amount and color of the light collected by the photosites.

(Photosites in a sensor are not exactly the same as pixels, but for a beginner it is enough to note that the two words can be used interchangeably when talking about the collection of light, or photons.)

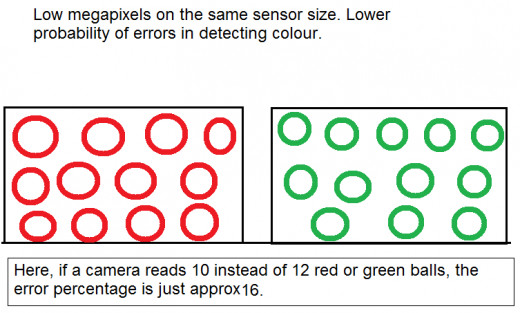

In simple words, the more crammed the pixels, the harder it is for the camera to detect the correct light intensity and colour. On the other hand, when the same number of pixels is spread over a bigger sensor, each pixel is larger and collects more light, so the chance of misreading the light drops.
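
To put rough numbers on this, here is a minimal sketch that estimates how much room each pixel gets when the same 13 megapixels are spread over two sensors. The sensor dimensions are approximate, illustrative values chosen for this example (they are not from any specific phone or camera), and the calculation assumes square pixels that cover the whole sensor with no gaps.

```python
import math

def pixel_pitch_um(width_mm: float, height_mm: float, megapixels: float) -> float:
    """Approximate side length of one square pixel, in micrometres."""
    area_per_pixel_mm2 = (width_mm * height_mm) / (megapixels * 1_000_000)
    return math.sqrt(area_per_pixel_mm2) * 1000  # convert mm to µm

# Illustrative, approximate sensor dimensions (assumptions, not from the article).
SENSORS = {
    "small phone sensor (about 4.8 x 3.6 mm)": (4.8, 3.6),
    "APS-C dSLR sensor (about 23.6 x 15.6 mm)": (23.6, 15.6),
}

for name, (w, h) in SENSORS.items():
    pitch = pixel_pitch_um(w, h, megapixels=13)
    print(f"{name}: {pitch:.2f} µm per pixel side, {pitch ** 2:.1f} µm² per pixel")
```

With these assumed dimensions, each pixel on the larger sensor gets roughly 21 times the area, which is the extra "bucket space" the paragraph above describes.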

The Classic Example

Here’s a classic bucket example in which pixels are treated as buckets, each collecting a specific primary color. The camera then reads these colors.

In the above example, the sensor size is the same but the buckets are larger (meaning fewer but more spacious pixels). The percentage of error dipped to 16%.

Note: In this illustration, all photons are treated as being the same size.

So if you are eyeing a cheap smartphone that boasts a huge megapixel count, keep an eye on its sensor size.
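
The bucket idea can also be sketched with a standard simplification: if the only source of error is the random arrival of photons (shot noise), the relative uncertainty of a count of N photons is about 1/√N. The photon counts below are hypothetical, and this model is separate from the figures quoted in the bucket illustration; it only shows the direction of the effect.

```python
import math

def relative_shot_noise(photons: float) -> float:
    """Relative uncertainty of a photon count under Poisson statistics: 1 / sqrt(N)."""
    return 1.0 / math.sqrt(photons)

small_bucket = 400       # hypothetical photons caught by a small pixel
large_bucket = 4 * 400   # a pixel with four times the area catches about four times as many

for label, n in (("small pixel", small_bucket), ("large pixel", large_bucket)):
    print(f"{label}: {n} photons, relative noise {relative_shot_noise(n):.1%}")
```

Under these assumptions, quadrupling the bucket size halves the relative noise (5.0% versus 2.5%). Real sensors add other noise sources that this sketch ignores.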

Image Processing

The other important factor is image processing. A few months ago, BlackBerry released an update for its BlackBerry 10 OS. The key feature of the update was a better low-light photography experience. Some might wonder how the quality of an image could be improved while the hardware stays the same. This is where image processing comes in.

There is a noticeable lag between the moment you click the photograph and the moment you see it on your display. The camera uses this time to process the image.

Keeping the hardware absolutely identical, a manufacturer can make a camera produce better-quality images simply by improving the image processing.
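
As a toy illustration of how software alone can clean up a picture, here is a minimal 3x3 median filter on a tiny grayscale grid. This is a textbook technique chosen for the example, not a description of what BlackBerry or any other manufacturer actually runs; the function name and the sample data are made up for the sketch.

```python
from statistics import median_low

def median_filter(image):
    """Return a copy of a 2-D grayscale image in which each pixel is replaced by
    the median of its 3x3 neighbourhood (edge pixels use the neighbours that exist)."""
    rows, cols = len(image), len(image[0])
    result = []
    for r in range(rows):
        out_row = []
        for c in range(cols):
            neighbours = [
                image[rr][cc]
                for rr in range(max(0, r - 1), min(rows, r + 2))
                for cc in range(max(0, c - 1), min(cols, c + 2))
            ]
            out_row.append(median_low(neighbours))
        result.append(out_row)
    return result

# A flat grey patch (value 100) with two noisy pixels.
noisy = [
    [100, 100, 100, 100],
    [100, 255, 100, 100],
    [100, 100, 100,   0],
    [100, 100, 100, 100],
]

for row in median_filter(noisy):
    print(row)
```

The two stray pixels are replaced by the value of their neighbours, so the same captured data yields a cleaner image. The trade-off is that a filter like this also smooths away fine detail in real photos.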

Lens

Lenses are close friends of sensors. While sensors are, in raw terms, light-absorbing elements, it is the lens that directs light into the camera correctly and in an orderly manner. Lens construction is complex, which is why lenses are costly.

Smartphones are getting thinner, and fitting in a lens as complicated as that of a dSLR is impossible. That said, some smartphone cameras are getting increasingly capable of snapping vibrant images without much noise or blur.

Noise

As already discussed, noise is the aberration caused when light detectors cannot read the right color intensity, which happens often when pixels are squeezed together and fighting for space with neighbouring pixels.

There are other ways noise seeps in. You must have noticed the grain in pictures taken indoors and at night. This mainly results from a high ISO value. ISO is the number that indicates the sensor’s sensitivity to light. The higher the ISO, the higher the sensitivity and the greater the chance of misrepresenting colors, which results in noise.

Grain becomes obvious when we zoom in on the image.
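
A simplified way to see why high ISO looks grainy: treat ISO as a plain gain applied to whatever light was captured. In a dim room fewer photons arrive, so the camera needs more gain to reach the same brightness, and that gain scales the noise along with the signal. The photon counts and target brightness below are hypothetical, and the sketch assumes shot noise only.

```python
import math

def output_noise(photons: float, target_level: float) -> float:
    """Noise, in output brightness levels, after scaling the signal to target_level.

    Assumes photon shot noise only (sqrt(N)) and models ISO as a plain gain.
    """
    gain = target_level / photons      # higher gain stands in for higher ISO
    return gain * math.sqrt(photons)   # the gain scales the noise along with the signal

TARGET = 200  # hypothetical output brightness wanted in both scenes

for scene, photons in (("daylight", 10_000), ("dim room", 100)):
    gain = TARGET / photons
    print(f"{scene}: gain {gain:g}, noise about {output_noise(photons, TARGET):.1f} levels")
```

In this model the dim-room shot ends up with ten times the noise of the daylight shot at the same final brightness, which shows up as grain.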

Desktop software such as Adobe Lightroom does a good job of reducing noise considerably, but using Adobe products demands a bit of sweat.

If you are not a professional photographer, it is not worth dedicating hours to removing grain from an image. You are better off using a noise remover app such as Systweak Photo Noise Reducer Pro (available for Mac and Android platforms).

This photo noise reduction app is the tap-and-go type, i.e. you won’t need any technical assistance. Just select a noise removal mode and you’re done.

Final Words

Never judge a book by its cover, and never judge a camera by its megapixels. A camera’s lens and image processing are best understood through honest reviews found on the internet. Take user reviews and image samples into account as well. Go for a branded smartphone or dSLR if you want on-paper promises to translate into real-world delights. Make a smart choice.